Navigating generative AI

Generative Artificial Intelligence (Gen AI) is a form of artificial intelligence that can write, draw, compose music and design products, all in response to user prompts.

Tools such as ChatGPT, Microsoft Copilot and Google Gemini are gaining popularity and are being adopted across various industries and businesses.

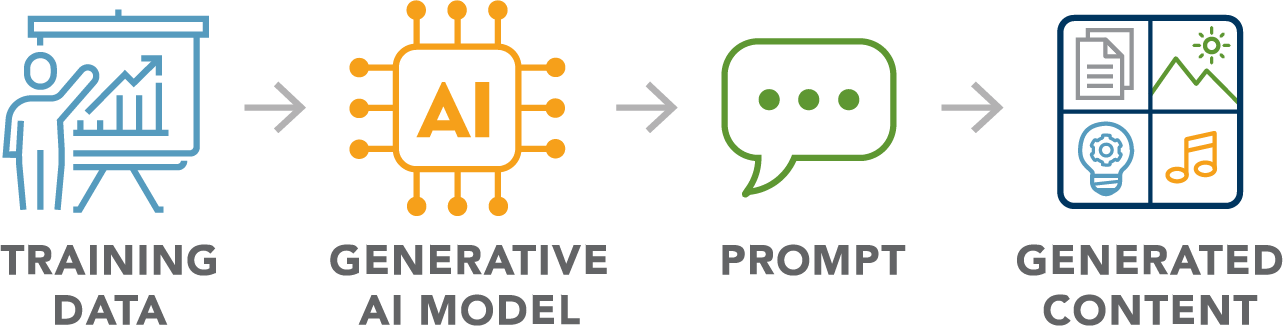

How Gen AI works

A Gen AI model is trained on large datasets, from which it learns patterns and relationships. Your prompt serves as the instruction or question. The model then generates text, images, music or designs in response to that prompt.
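To make the idea of learning patterns from data concrete, here is a deliberately tiny sketch in Python. It is not how production tools such as ChatGPT or Copilot are built; it only counts which word tends to follow which in a made-up training text, then extends a prompt by picking the most frequent follower. The corpus and the function names `train` and `generate` are illustrative assumptions.

```python
from collections import Counter, defaultdict


def train(corpus: str) -> dict[str, Counter]:
    """Count which word follows which: the 'patterns' learned from the data."""
    words = corpus.lower().split()
    model: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        model[current][nxt] += 1
    return model


def generate(model: dict[str, Counter], prompt: str, max_words: int = 5) -> str:
    """Extend the prompt by repeatedly choosing the most frequent next word."""
    output = prompt.lower().split()
    if not output:
        return ""
    for _ in range(max_words):
        followers = model.get(output[-1])
        if not followers:
            break  # the model never saw this word followed by anything
        output.append(followers.most_common(1)[0][0])
    return " ".join(output)


# Made-up training text, for illustration only.
corpus = "the cat sat on the mat . the cat sat on the sofa ."
model = train(corpus)
print(generate(model, "the", max_words=3))  # prints "the cat sat on"
```

The toy model has no notion of whether a sentence is true. It only reproduces the most common pattern it saw, which is also why a prompt containing a word it never saw ("dog") is returned unchanged.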

How is Gen AI used in the workplace?

Gen AI is becoming increasingly useful and is already transforming industries.

Healthcare

Personalized treatments and drug discovery

Finance

Market predictions and fraud detection

Manufacturing

Quality control, predictive maintenance, and design optimization

Any business that manages data can gain advantages from Gen AI. It streamlines processes, increases productivity and fosters innovation. With many breakthroughs already achieved in Gen AI technology, more advancements are expected soon.

However, while Gen AI offers impressive capabilities, it is important to recognize potential concerns and risks.

Understanding the risks

Gen AI is impressive, but not flawless. The risks to consider include:

Unreliable content

Gen AI can “hallucinate” facts or cite fake or outdated sources. Gen AI doesn’t know facts. It makes predictions based on patterns in its training data, so it cannot verify what it says.

Bias and discrimination

Gen AI may reinforce stereotypes or skew results. This can happen when it learns biased patterns from its training data.

Privacy and security

Deepfakes, voice scams and phishing are real threats that are becoming more sophisticated and harder to detect. All forms of AI are data-driven and may be misused by “bad actors.”

Ultimately, these risks affect Gen AI users and, if not addressed, could spread into everyday tools and shape how people think.

Regulatory landscape

As of 2025, the United States still lacks a comprehensive federal law covering all aspects of AI. The latest indications suggest that federal regulations, if any, will prioritize innovation over excessive rules.

However, at the state level, it's a different story. Some states have enacted AI-related laws, many of which focus on data privacy, transparency and bias prevention. Although there isn't a single global AI law yet, numerous countries are working toward one. The European Union is leading with the EU AI Act, the world’s first comprehensive AI regulation that categorizes AI systems by risk and sets different requirements for each.

Although AI legislation is advancing, it’s likely to lag behind the pace of AI technology itself. Whether you’re developing, using, or simply interested in AI, staying informed is essential.

Mitigating AI risks

Security and privacy

Security and privacy are vital. Organizations using Gen AI in the workplace need up-to-date, relevant safety measures in place.

- Encrypt everything – data at rest and in transit.

- Limit access – only authorized people should have access to certain data.

- Consider an Enterprise Data Protection (EDP) contract for Gen AI tools such as Microsoft Copilot. An EDP helps protect your company’s data, keeps it private, and prevents it from being used to train AI foundation models.
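As one possible illustration of the "limit access" point, the sketch below filters documents by role before any of them are passed to a Gen AI tool. The roles, data categories and function names are hypothetical and would need to be replaced by an organization's own access-control system; this is a minimal sketch, not a complete security control.

```python
# Hypothetical roles and data categories, for illustration only.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "hr_manager": {"employee_records", "public_docs"},
    "analyst": {"sales_reports", "public_docs"},
    "intern": {"public_docs"},
}


def can_access(role: str, data_category: str) -> bool:
    """Deny by default: unknown roles or categories get no access."""
    return data_category in ROLE_PERMISSIONS.get(role, set())


def build_prompt_context(role: str, documents: dict[str, str]) -> list[str]:
    """Keep only the documents this role may see before they reach a Gen AI tool."""
    return [text for category, text in documents.items() if can_access(role, category)]


documents = {
    "sales_reports": "Q1 revenue summary",
    "employee_records": "Confidential HR file",
}
print(build_prompt_context("analyst", documents))  # prints ['Q1 revenue summary']
```

The deny-by-default lookup means a misspelled or unregistered role receives nothing, which is usually the safer failure mode.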

The AI Risk Management Framework for Gen AI from the National Institute of Standards and Technology (NIST AI 600-1) can serve as a playbook for managing Gen AI risk.

Lastly, to navigate the risks of using Gen AI in your organization, ensure full transparency and accountability, and verify the accuracy of every output from any Gen AI tool.

Governance

To reduce unreliable content and biased or discriminatory outcomes, and to protect data privacy and security, organizations need solid governance.

Some best practices to consider:

- Stay updated: Keep track of relevant AI laws and compliance requirements.

- Verify outputs: Gen AI tools have limitations, so do your due diligence.

- Train employees properly: Teach those in your organization about the ethical, safe use of AI, including company policies.

Gen AI should not be used for:

- Handling private or sensitive information.

- Making final legal, financial or medical decisions.

- Reviewing official policy or contracts – it might miss some legal nuances or introduce the wrong context.

- Performing employee background checks, which may result in unfair or discriminatory outcomes.

- Determining compliance or regulatory requirements – it may misinterpret the context, provide outdated information or include other inaccuracies.
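For the first item in the list above, one common safeguard is to strip obvious identifiers from text before it is ever sent to a Gen AI tool. The following is a minimal sketch, assuming that email addresses and US Social Security numbers are the sensitive items of interest. The patterns and the `redact` helper are illustrative assumptions; they will miss many kinds of sensitive data and are not a substitute for a proper data-loss-prevention process.

```python
import re

# Simplified patterns, for illustration only; real data needs broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace email addresses and SSN-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_SSN.sub("[SSN]", text)


prompt = "Summarize the complaint from jane.doe@example.com, SSN 123-45-6789."
print(redact(prompt))
# prints "Summarize the complaint from [EMAIL], SSN [SSN]."
```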

Summary

Gen AI is rapidly transforming workplaces and beyond, offering efficiency, creativity and convenience. However, it carries risks throughout its lifecycle—from training to deployment. While federal regulations lag, organizations should consider managing these risks through diligence, adequate risk management controls and ongoing monitoring.

When used responsibly, Gen AI can be a significant, positive game-changer.

Sources

National Cyber Security Centre

National Institute of Standards and Technology (NIST AI 600-1)
